To my surprise, I found out that the GPU (PowerVR SGX 540) in my venerable Nexus S (2010) supports vertex texture fetch (VTF), that is, reading texture pixels in the vertex shader, which is a very useful feature for terrain rendering. About a year ago, when I started investigating terrain rendering on Android devices, I searched for VTF support and concluded it wasn't there yet (similar to the situation years ago, when desktop OpenGL 2.0 was released with support for texture sampling in GLSL vertex shaders but most GL implementations just reported GL_MAX_VERTEX_TEXTURE_IMAGE_UNITS as zero). I don't know how I missed it on my own phone; maybe an Android update during the last year brought updated GPU drivers? I have no idea how many other devices support it now. Hopefully, the newest ones with OpenGL ES 3 all support it. I wouldn't be surprised if, among GLES 2 devices, only PowerVR + Android 4+ combinations supported it.

Overview

Anyway, let's focus on terrain rendering. A rough outline:

- Put the entire heightmap into a texture.

- Have a small 2D grid (say 16x16 or 32x32) geometry ready for rendering terrain tiles.

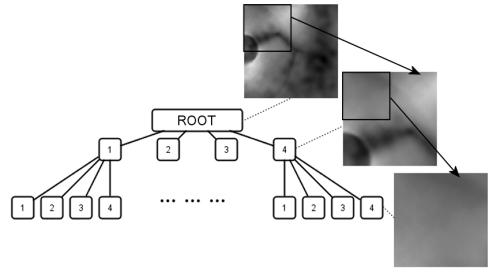

- Build a quadtree over the terrain. The root node covers the entire terrain and each child then covers 1/4 of its parent's area.

- Now we can start rendering. Do this every frame:

  - Traverse the quadtree starting from the root.

  - For each node visited, test whether the geometry grid provides sufficient detail for rendering the area covered by this node:

    - YES, it does: mark this node for rendering and end traversal of this subtree.

    - NO, it does not: continue traversal and test the children of this node (unless we're at a leaf already).

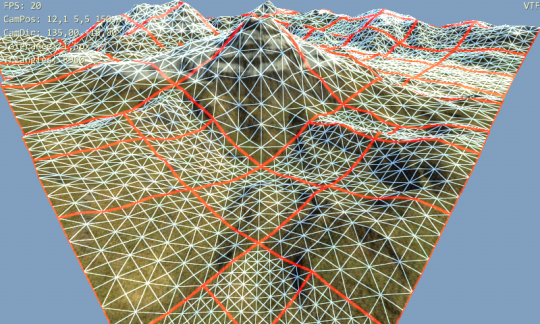

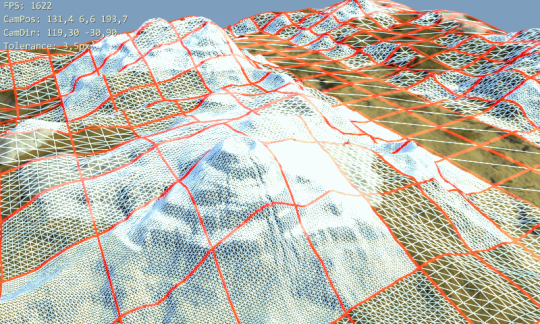

- Now take this list of marked nodes and render them. The same 2D grid is used to render each tile: it's scaled according to the tile's covered area and its vertices are displaced by height values read from the texture.
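The per-frame selection outlined above can be sketched on the CPU side roughly as follows. This is only an illustration under my own assumptions, not the demo's actual code: the quadtree is implicit (children are created on the fly instead of being stored), and `Node`, `select_tiles`, `min_size` and the `detail` factor are hypothetical names. The "sufficient detail" test here is a plain distance check.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    x: float     # min corner of the covered square, in heightmap texels
    y: float
    size: float  # side length of the covered square


def select_tiles(node, camera, min_size, detail=1.5, out=None):
    """Collect the nodes that should be rendered this frame.

    camera is an (x, y) position over the terrain. A node is accepted when
    it is a leaf (size <= min_size) or when it is far away relative to its
    size; otherwise its four children are tested.
    """
    if out is None:
        out = []
    # Distance from the camera to the nearest point of the node's square.
    dx = max(node.x - camera[0], 0.0, camera[0] - (node.x + node.size))
    dy = max(node.y - camera[1], 0.0, camera[1] - (node.y + node.size))
    dist = math.hypot(dx, dy)

    if node.size <= min_size or dist >= detail * node.size:
        out.append(node)  # YES: render this node, stop descending
    else:
        half = node.size / 2.0  # NO: each child covers 1/4 of the area
        for cy in (node.y, node.y + half):
            for cx in (node.x, node.x + half):
                select_tiles(Node(cx, cy, half), camera, min_size, detail, out)
    return out


tiles = select_tiles(Node(0.0, 0.0, 1024.0), camera=(0.0, 0.0), min_size=32.0)
print(len(tiles), "tiles selected")
```

Each selected node's `x`, `y` and `size` are exactly what the shared grid needs as offset and scale when the tile is drawn. With these assumed parameters and the camera in a corner, a 1024-texel terrain yields 52 tiles and a 4096-texel one yields 76, compared to 1024 and 16384 leaves of size 32, so the tile count grows only slowly with terrain size.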

This is basically what I originally wanted for Multilevel Geomipmapping years ago but couldn't do in the end because of the state of VTF support on desktop at that time.

So what exactly is the benefit of VTF over, let's say, geomipmapping here?

The main benefit is the ability to get the height of the terrain at any position (and multiple times) when processing each tile vertex. In traditional geomipmapping, even if you can move tile vertices around, it's no use since you have only one fixed height value available per vertex. With VTF, you can modify the position of a tile vertex as you like and still get the correct height value.

This greatly simplifies tasks like connecting neighboring tiles with different levels of detail. No ugly skirts or special stitching strips of geometry are needed, as you can simply move edge points around in the vertex shader. Geomorphing solutions also become usable without much work.

And you can display larger terrains as well. With geomipmapping, you always have to draw all the visible leaf tiles, a number that goes up fast when you enlarge the terrain. VTF may allow you to draw a fixed number of tiles regardless of the actual terrain size (as distant tiles cover a much larger area compared to geomipmap tiles with their fixed area). Another benefit: terrain normals can be calculated inside the shaders from neighboring height values.
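To make the stitching and normal ideas more concrete, here is a small CPU-side sketch of what a vertex shader could do. It is written under my own assumptions: the heightmap is a plain 2D list, `height_at` stands in for the texture fetch, and `tile_vertex`, `normal_at` and the `coarser` dictionary are hypothetical names. Edge vertices that border a coarser tile are slid along the edge onto the neighbour's grid, and the height is then simply read again at the new position.

```python
import math


def height_at(heightmap, x, y):
    """Nearest-texel height lookup, clamped to the heightmap borders."""
    rows, cols = len(heightmap), len(heightmap[0])
    xi = min(max(int(math.floor(x + 0.5)), 0), cols - 1)
    yi = min(max(int(math.floor(y + 0.5)), 0), rows - 1)
    return heightmap[yi][xi]


def tile_vertex(origin, size, n, i, j, heightmap, coarser=None):
    """Return (x, y, height) of grid vertex (i, j), with i and j in 0..n.

    coarser maps an edge name ('left', 'right', 'bottom', 'top') to how many
    times coarser the neighbouring tile's grid is (2, 4, ...). n must be
    divisible by that ratio so the corners stay in place.
    """
    coarser = coarser or {}
    # Pick the snapping ratio for movement along each edge.
    ratio_j = coarser.get("left", 1) if i == 0 else coarser.get("right", 1) if i == n else 1
    ratio_i = coarser.get("bottom", 1) if j == 0 else coarser.get("top", 1) if j == n else 1
    # Slide the vertex onto the nearest lower vertex of the coarser grid.
    i, j = i - i % ratio_i, j - j % ratio_j

    step = size / n
    x = origin[0] + i * step
    y = origin[1] + j * step
    # The height is fetched at the *moved* position, so it stays correct.
    return (x, y, height_at(heightmap, x, y))


def normal_at(heightmap, x, y, spacing=1.0):
    """Unit normal (z is up) from central differences of neighbouring heights."""
    dx = height_at(heightmap, x - spacing, y) - height_at(heightmap, x + spacing, y)
    dy = height_at(heightmap, x, y - spacing) - height_at(heightmap, x, y + spacing)
    dz = 2.0 * spacing
    length = math.sqrt(dx * dx + dy * dy + dz * dz)
    return (dx / length, dy / length, dz / length)
```

Snapping like this produces some zero-area triangles along the shared edge, but every edge vertex of the finer tile then coincides with a vertex of the coarser neighbour, so no cracks can open between them.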

Finally, since the heightmap is now a regular texture, you get filtering and access to compressed texture formats, so you can fit more of the terrain into memory.
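As a reminder of what filtering buys you, this is roughly what a bilinear lookup computes for a height sample that falls between texels. It is a simplified sketch: `sample_bilinear` is a hypothetical helper, coordinates are in texels with integer values sitting exactly on texels (real GPUs use a half-texel offset), and whether a given GPU actually filters textures fetched in the vertex shader is something I'm not assuming here.

```python
import math


def sample_bilinear(heightmap, x, y):
    """Bilinearly interpolated height at texel coordinates (x, y)."""
    rows, cols = len(heightmap), len(heightmap[0])
    # Clamp to the valid range, like a clamp-to-edge wrap mode.
    x = min(max(x, 0.0), cols - 1.0)
    y = min(max(y, 0.0), rows - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, cols - 1), min(y0 + 1, rows - 1)
    fx, fy = x - x0, y - y0
    # Blend horizontally on both rows, then vertically between the rows.
    row0 = heightmap[y0][x0] * (1.0 - fx) + heightmap[y0][x1] * fx
    row1 = heightmap[y1][x0] * (1.0 - fx) + heightmap[y1][x1] * fx
    return row0 * (1.0 - fy) + row1 * fy


heights = [[0.0, 10.0],
           [20.0, 30.0]]
print(sample_bilinear(heights, 0.5, 0.5))  # midpoint of the four texels: 15.0
```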

There must be some disadvantages, right?

Sure, support for VTF on mobile GLES 2 GPUs is scarce, so for anything other than a tech demo it's useless for the time being. Hopefully, all GLES 3 GPUs will support VTF, and with usable performance: VTF was uselessly slow on desktops in the beginning.

Implementation

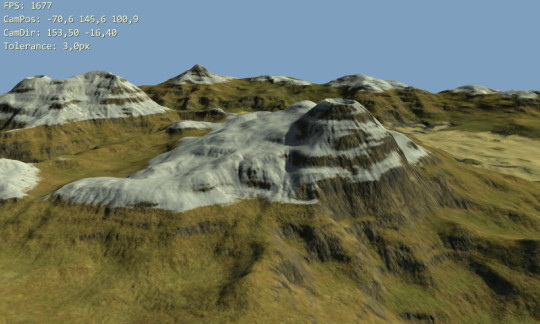

I have added an experimental VTF-based terrain renderer to the Terrain Rendering Demo for Android testbed and it looks promising. Stitching of the tiles works flawlessly. More work is needed on selecting nodes for rendering (render the node or split it into children?). Currently, there's only a simple distance-based metric, but I want to devise something that takes the classical "screen-space error" into account. And maybe some fiddling with geomorphing on top ...
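For reference, the textbook form of a screen-space error test looks something like the sketch below. This is not what the demo does (it uses the distance metric for now), and `screen_space_error`, `should_split`, `geometric_error` and `tolerance_px` are names I made up for illustration. The idea is to project a node's world-space geometric error to pixels using a perspective camera's vertical field of view and to split the node when the result exceeds a pixel tolerance.

```python
import math


def screen_space_error(geometric_error, distance, viewport_height, fov_y):
    """Approximate size in pixels of a world-space error seen at a distance.

    fov_y is the vertical field of view in radians; viewport_height is in
    pixels. Assumes a standard perspective projection.
    """
    pixels_per_unit_at_1 = viewport_height / (2.0 * math.tan(fov_y / 2.0))
    return geometric_error * pixels_per_unit_at_1 / max(distance, 1e-6)


def should_split(geometric_error, distance, viewport_height, fov_y, tolerance_px=2.0):
    """Split the node when its projected error is larger than the tolerance."""
    return screen_space_error(geometric_error, distance, viewport_height, fov_y) > tolerance_px
```

How the per-node `geometric_error` is obtained (for example, the largest height difference between the node's grid and the full-resolution heightmap) is a separate design decision that this sketch leaves open.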

Some of the implementation details will follow in part 2 (soon!).